You’re now ready to turn attention to some major local business assets: the website and listings. As you continue the work of drafting a plan of action to meet your goals, you need to assess whether:

- The website is free of technical barriers

- The website is locally optimized for the search terms you’ve identified

- The website contains basic best practice pages

- The website has an effective publishing strategy

- The right local business listings are being managed as valuable assets

- All the most visible local business listings are accurate and free of problems

Let’s walk through this, step-by-step.

Begin by surfacing the unique selling proposition (USP)

The website, local business listings, and all forms of marketing must communicate a succinct and compelling message that helps the business become known in its market for something the public wants. Inspiration can come from studying the notable USPs of other brands, paying special attention to the attributes on which they hinge. Check out these five examples:

Affinity

“Build the best product, cause no unnecessary harm, use business to inspire and implement solutions to the environmental crisis,” is the aim of Patagonia. This large outdoor outfitter chain has famously aligned its brand with environmental and social causes, giving motivated customers psychological satisfaction in choosing them. They have mastered customer-brand affinity. Emphasize what the customer base for your brand is passionate about.

Uniqueness

“Experience the ancient traditions of the Yurok people and feel at one with the river, ocean, and forest,” is the USP of Redwood Yurok Canoe Tours. The Yurok Tribe’s offering is completely unique – taking customers on guided adventures in traditional canoes in the California redwoods. It’s an experience you can’t get anywhere else in the world. Promote the rarest, most fascinating qualities of the business.

Authority

“Manufacturing the highest quality tents since 1890,” is the proud proclamation of Denver Tent. Customers looking for classic canvas tents made in the USA are able to feel trust in a business that’s been making a product for well over 100 years. It’s authority that’s the selling point here. Market the longevity of long-established clients, and for newer businesses, market any exceptional expertise offered.

Quality

“Where the world’s finest wood meets genuine craftsmanship,” is the USP of Gillis Canes. Hikers seeking a luxury walking stick are bound to encounter this small brand based in Chittenango, NY offering handmade, custom pieces. Here, quality is winning the day. If you can truly back up claims of quality with an exceptional product or service, go strong on messaging surrounding this.

Convenience

“Enjoy breakfast and lunch lakeside,” is the short and sweet claim to fame of Deer Valley Grocery. Sometimes, location is all it takes to make even a small, independent business locally famous, like this grocery store with its natural setting next to a renowned snow park. Here, proximity and convenience are the biggest draws, and for some local brands, these attributes are top of mind for specific customer needs.

If you can take all that you’ve learned in the research phases of chapters 1 & 2, and pair it with the most compelling USP, you are ready to analyze whether the website and local business listings are sending the right message to the right audience.

Next, check for basic pages and contact information on the website

Nearly every local business website should contain the following basic pages:

- Home

- Contact us page with NAP (name, address, phone number) up top

- About

- Reviews/testimonials

- Location landing pages (for multi-location businesses)

- A unique page for every major product/service

- A unique page for each practitioner in a multi-practitioner model

- Sitemap

- A page for policies, disclaimers, guarantees, terms of service, etc.

A website may have dozens or even thousands of pages, but be sure none of these basics have been overlooked.

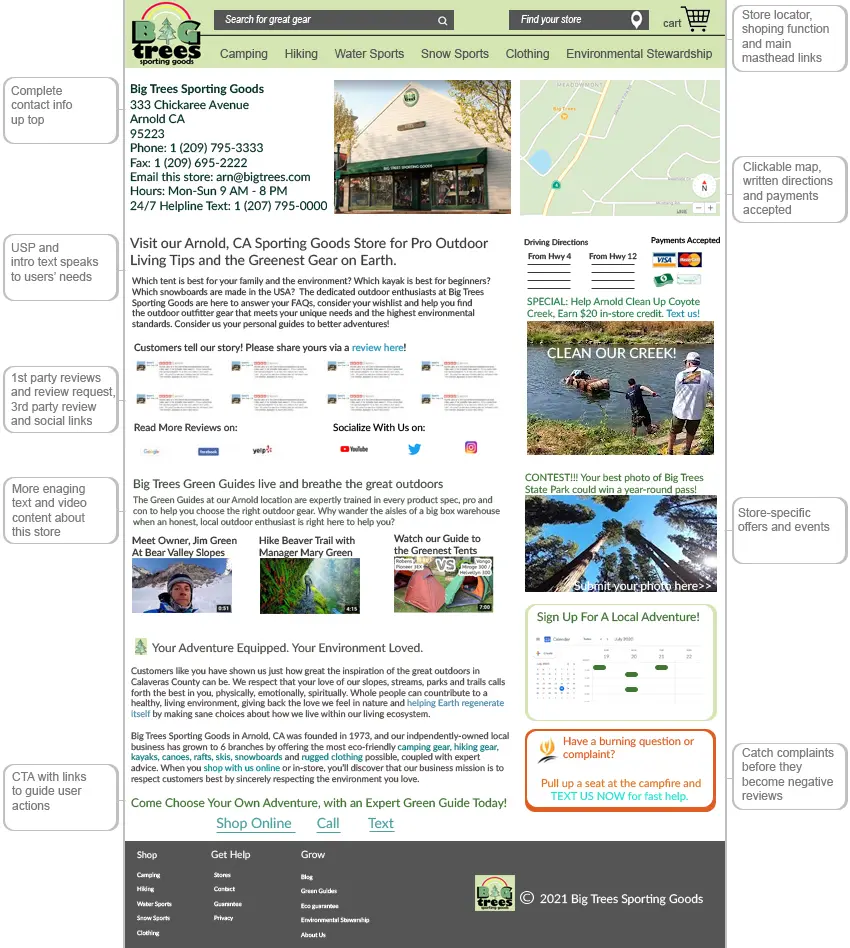

Next, do a careful audit of any location landing pages for multi-location businesses

Every unique, public-facing location of a local business should have its own page on your website. The core function of a location landing page is to ensure that a visitor clicking into the page from a local business listing, organic search result, third party site, or another page of the brand’s website finds everything they need about a specific branch to take the next step.

Use this mockup of a location landing page for a fictitious business when auditing existing pages, or when creating a plan to develop new ones. Effective location landing pages will contain some or all of the following elements:

FAQs about location landing pages

Q: What should I put on these pages?

A: Prioritize content that will be most helpful to customers. Use multiple media — text, images, videos — to tell a story about what customers need and can expect from a particular branch, and heavily localize each page with proof of the brand’s community involvement.

Q: Should service area businesses build location landing pages?

A: Traditionally, location landing pages represent distinct addresses of a business. If you’re marketing a single location business (like a plumbing company) that serves multiple cities, you could consider building landing pages for its service cities. Best advice is to only do so if you can publish fully localized and unique content on each page. If the work being done and the consumer experience is identical from city to city throughout a service area, the pitfall would be lowering the overall quality of your website by producing thin, duplicate pages. Avoid that. But, if the business has unique projects in each city that you can showcase, you may have the makings of some quality landing pages.

Q: I want customers to come to the business from other cities — will location landing pages help?

A: Typically, no. The fact that a business might have customers that come to it from other cities isn’t really the cause for creating a landing page, as this information is likely to be of little interest to readers. The exception to this would be if the business has an interesting relationship with a neighboring city.

For example, let’s say you’re marketing a whitewater rafting guide business. It’s located in Auburn, California, from which guides take customers rafting on the middle fork of the American River. However, guides also meet up with guests in the town of Coloma for trips on the south fork of the American, and for other excursions, they meet up in Somes Bar, California for trips on the Salmon River. The business is legitimately involved in three different towns, offering three different experiences to customers, and has every reason to create unique pages to showcase this.

Another example might be a doctor with a private practice in Town A, hospital privileges in Town B, and who gives lectures in town C. If the relationship with neighboring communities is authentic and interesting, it’s something landing pages can be created for to associate the doctor’s name with a wider geographic region, potentially bringing in patients from farther away.

Q: Should I link to location landing pages from the Google Business Profile listings and other citations?

A: Every multi-location business has to make a decision about whether to link from their local listings to their website homepage or to the landing pages they have built for each branch. Some SEOs recommend linking all listings to the homepage, because it has typically earned the most authority (i.e. the most links) and it has more power to potentially boost Google local pack rankings. Other SEOs recommend linking from the listing to its respective landing page because it’s a better, more direct, immediate user experience (UX), taking the searcher directly to the content they need to see.

You’ll need to look at what your top competitors are doing, according to your analysis, and may eventually need to test whether SEO concerns or UX concerns should have the upper hand in which page you link to from each branch’s local listings.

Q: Who should have a store locator widget?

A: If a business grows to more than five locations, it’s definitely time to consider adding a store locator widget instead of just linking to the location landing pages from the site’s navigation menu. Best practices include being sure that the widget you choose offers a good user experience and doesn’t force the user to find their nearest location via ZIP code (travelers frequently don’t know the ZIP codes of towns they are visiting). Also, because store locator widgets hide location landing pages behind a search function, be certain you’re listing out these pages in the website’s sitemap to ensure search engines crawl and index them.

Q: I’m marketing a ton of locations — how unique does the content really need to be?

A: If you duplicate the content on a website across dozens or hundreds of location landing pages, you aren’t doing your site quality any favors. That being said, large brands demonstrably “get away with” having low quality location landing pages. If Google can index them, they will still rank them, possibly because of the overall authority typical of big brand websites. However, treating these pages as throwaways isn’t good business; it’s a missed opportunity to improve conversions, leads, and revenue.

The smaller the business, the more effort should be put into making its location landing pages best-in-class, in hopes of beating out lazier competitors for a variety of keyword searches. And even big brands with large numbers of locations should strongly consider investing in high quality landing pages for their stores to maximize revenue potentials.

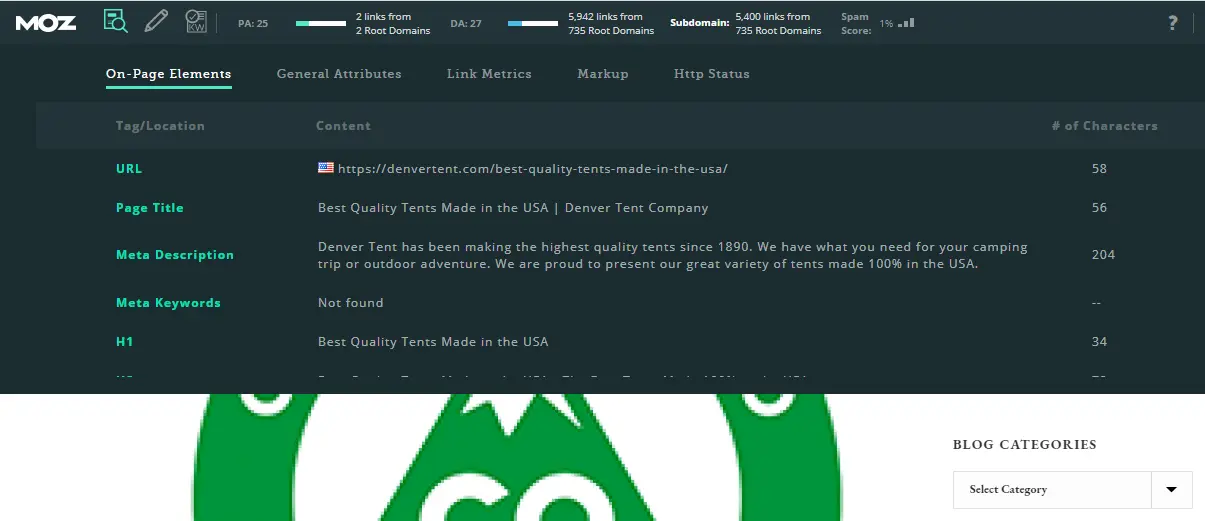

Next, do a technical and SEO audit of the website

Assess each of these basic technical and SEO elements on the website and document your findings:

Domain

The domain name is the “address” of the website. In local search, Google has historically shown a bias towards domain names that exactly match a user’s search language. For example, if a user searches for an environmentally-friendly tent via the phrase “eco tent,” Google might be swayed to consider this domain a relevant result.

If the website has an exact match domain (EMD), it may be a signal in their favor.

HTTPS

Check to be sure the website is using https instead of the older, non-secure http. In 2018, Google’s Chrome browser began labeling websites that fail to use the https protocol as “Not secure.”
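As a rough way to document this during an audit, the sketch below lists any crawled URLs that are not served over https. The URL list is a hypothetical crawl export for the ecotent.com example used in this chapter, not real data.

```python
from urllib.parse import urlparse


def insecure_urls(urls):
    """Return the URLs whose scheme is anything other than https."""
    return [u for u in urls if urlparse(u).scheme.lower() != "https"]


# Hypothetical URLs gathered while crawling the site being audited.
crawled = [
    "https://ecotent.com/",
    "http://ecotent.com/contact",
    "https://ecotent.com/about",
]

for url in insecure_urls(crawled):
    print("Not using https:", url)
```

Any URL this flags is worth checking by hand, since it may simply redirect to its https equivalent.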

Subdomain

The main thing to watch out for with subdomains is that they can be the source of canonicalization errors. In simple terms, with improper canonicalization, you can end up with duplicates of website pages instead of a single master page. Read this extensive document on canonicalization and note whether any problems exist for the website.
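One rough way to surface candidate canonicalization problems is to group crawled URLs that differ only by protocol, a leading www, or a trailing slash. This is a minimal sketch under those assumptions. The URLs are hypothetical, and real duplicates can also come from query parameters or other subdomains, which this does not cover.

```python
from collections import defaultdict
from urllib.parse import urlparse


def duplicate_candidates(urls):
    """Group URLs that differ only by scheme, a leading 'www.', or a trailing slash."""
    groups = defaultdict(list)
    for url in urls:
        parts = urlparse(url)
        host = parts.netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        path = parts.path.rstrip("/") or "/"
        groups[(host, path)].append(url)
    # Keep only the groups where more than one URL maps to the same page.
    return {key: found for key, found in groups.items() if len(found) > 1}


# Hypothetical crawl export.
crawled = [
    "https://ecotent.com/tents",
    "http://www.ecotent.com/tents/",
    "https://ecotent.com/about",
]

for (host, path), found in duplicate_candidates(crawled).items():
    print(host + path, "is reachable as:", found)
```

Each group is only a candidate: if the variants redirect to one master URL or declare it as canonical, there may be no problem to report.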

Subdomains are also part of a long-standing discussion of whether to organize a site’s structure via subdomains or subfolders. For example, should a blog be organized as blog.ecotent.com (a subdomain) or as ecotent.com/blog (a subfolder). Our recommendation is that it’s best to go with subfolders. Make note of anything less than ideal in the structure of subdomains/subfolders.

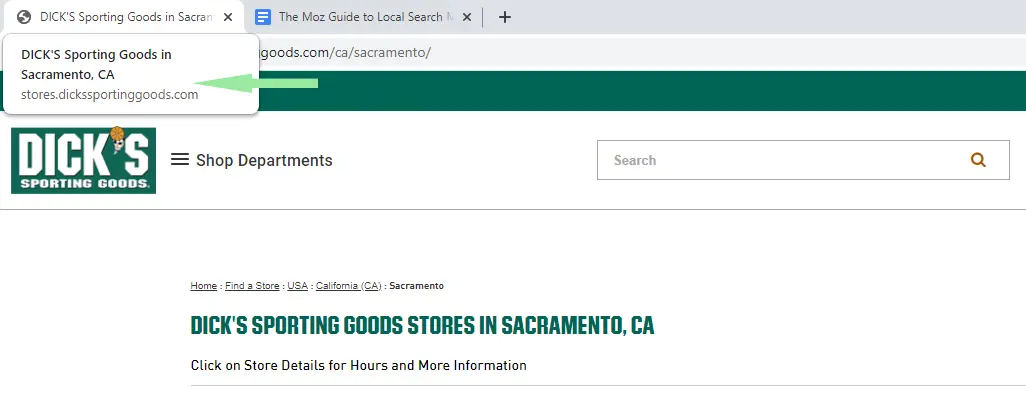

URL

Related to the topic of domains is the concept of optimized URLs. URL stands for Uniform Resource Locator, and you should assess whether the URLs on the website have been locally optimized. For example, if Eco Tents, Inc. has a store in Sacramento, California, the URL for this store might look like:

https://ecotent.com/sacramento…

Meanwhile, their product page for canvas tents might look like:

https://ecotent.com/canvas-ten…

From an SEO standpoint, make sure that the URL reflects the content of the page, and, ideally, contains the most important keywords for that page. Even though Google can understand the local intent of a search term, don’t overlook the importance of including city and other geographic keywords in your URLs if they are relevant to the content of each page.
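To make this check repeatable across many pages, here is a small sketch that reports which of a page’s target keywords are absent from its URL path. The function name, the example URLs, and the keyword lists are all hypothetical illustrations, and the URLs are not the ones shown above.

```python
from urllib.parse import urlparse


def missing_url_keywords(url, keywords):
    """Return the keywords that do not appear as words in the URL's path."""
    path = urlparse(url).path.lower()
    # Treat slashes, hyphens, and underscores as word separators.
    words = set(path.replace("/", "-").replace("_", "-").split("-"))
    return [k for k in keywords if k.lower() not in words]


# Hypothetical pages paired with the keywords you would expect in each URL.
pages = {
    "https://ecotent.com/locations/sacramento-ca": ["sacramento", "tents"],
    "https://ecotent.com/products/canvas-tents": ["canvas", "tents"],
}

for url, keywords in pages.items():
    print(url, "is missing:", missing_url_keywords(url, keywords))
```

A keyword showing up as missing is a prompt to review the URL, not proof that it needs to change. A location page, for example, may not need a product keyword at all.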

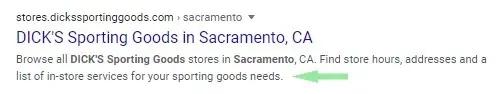

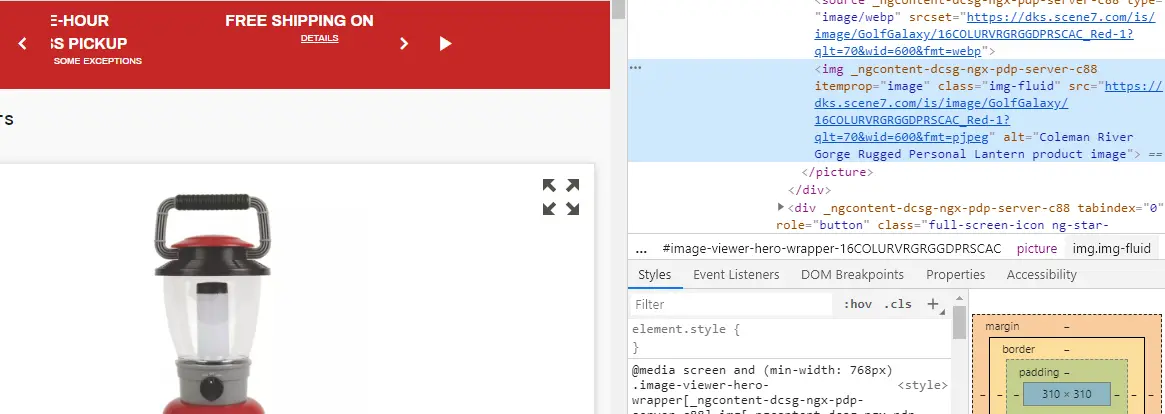

URLs show up in Google’s organic search engine results, and if they are well-optimized with relevant keywords, they can contribute to a user’s decision to click on a result, as in this example of the DICK’s Sporting Goods page for Coleman brand coolers:

NAP

Be sure the company’s name, address, and phone number are accurately represented on the website and are crawlable text — not couched in an image.

Single location businesses can include their NAP in the website’s sitewide header/footer and as the very first element on the Contact page. For a multi-location business, the NAP of each location should be the first element on each location’s landing page. Be sure the NAP is accurate for each location and placed in the appropriate areas of the website.
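If you want to verify that the NAP is crawlable text rather than part of an image, a sketch like the following can help. It collects only the text nodes of a page and looks for exact matches, so it is deliberately crude. The business details and HTML are made-up sample data.

```python
from html.parser import HTMLParser


class TextCollector(HTMLParser):
    """Collect the text nodes of a page, skipping script and style content."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def nap_is_crawlable(html, name, address, phone):
    """Report whether each NAP element appears verbatim in the page text."""
    parser = TextCollector()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())  # collapse whitespace
    return {"name": name in text, "address": address in text, "phone": phone in text}


# Hypothetical footer in which the phone number exists only inside an image.
page = """
<footer>
  <p>Eco Tents, Inc.</p>
  <p>123 Example St, Sacramento, CA 95814</p>
  <img src="phone.png" alt="(916) 555-0100">
</footer>
"""

print(nap_is_crawlable(page, "Eco Tents, Inc.", "123 Example St, Sacramento, CA 95814", "(916) 555-0100"))
```

Because the match is exact, differences in formatting (such as a phone number written with dots instead of hyphens) will show up as misses and should be reviewed by eye.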

Title tags

Determine whether an ideal title tag exists for each page of the website. You can see the website title tag of a page by mousing over its browser tab. In this screenshot, the title tag reads “DICK’S Sporting Goods in Sacramento, CA”:

Display length for title tags varies significantly across different devices, and they’re considered one of the most influential SEO elements of any website. Analyze all title tags on the website with these best practices in mind:

- Do they reflect the topic of the page?

- Are they human-readable tags (instead of a jumble of keywords) that will inspire people to click through from the search results to your website?

- Do they include the page’s most important keywords in natural language?

- Are the most important keywords placed toward the beginning of the title tags?

- Are geographic keywords (city in particular) included?

- Is each page’s title tag unique, or are there duplicates?

- How is the length? Some SEOs advocate sticking to around 50-60 characters in title tags, while others experiment with using available space beyond the ellipsis for additional keywords. Analyze based on your own theories drawn from testing.

Use the free MozBar to speed up analysis and to pull in all of a site’s tags at once.
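Once you have exported the tags, several of the checks in the list above can be automated. The sketch below assumes you have a mapping of page paths to title tags (the sample data is invented) and uses the 60-character guideline mentioned above as an adjustable default, not a hard rule.

```python
from collections import Counter


def audit_titles(titles, city, max_length=60):
    """Return (url, issue) pairs for a {url: title} mapping."""
    counts = Counter(t.strip().lower() for t in titles.values())
    issues = []
    for url, title in titles.items():
        clean = title.strip()
        if not clean:
            issues.append((url, "missing title"))
            continue
        if len(clean) > max_length:
            issues.append((url, f"longer than {max_length} characters"))
        if city.lower() not in clean.lower():
            issues.append((url, f"city '{city}' not mentioned"))
        if counts[clean.lower()] > 1:
            issues.append((url, "duplicate title"))
    return issues


# Hypothetical export of title tags.
titles = {
    "/": "Eco Tents | Canvas Tents in Sacramento, CA",
    "/about": "Eco Tents | Canvas Tents in Sacramento, CA",
    "/canvas": "Canvas Tents",
}

for url, issue in audit_titles(titles, city="Sacramento"):
    print(url, "->", issue)
```

Questions like whether a title reads naturally or inspires a click still need human judgment, so treat this as a first pass only.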

Meta description tags

While meta description tags do not influence rankings, they can influence click-through rates (CTRs) and should be treated as a marketing element of the page. While Google sometimes auto-generates a meta description element for pages, here’s how they typically display them in the SERPs, just below the title tag:

Analyze whether the site’s meta tags are doing all they can to invite clicks and highlight USPs. Google has been experimenting a great deal with the display length of meta descriptions, sometimes showing 300+ characters in the area of the search engine results, but it remains a best practice to write tags that are around 150 characters.
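A quick length check can be sketched as follows. It reads the meta description out of a page’s HTML and compares it with the roughly 150-character guideline above. The sample HTML is invented, and the target is a parameter you can adjust to match your own testing.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Capture the content of <meta name="description"> if present."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""


def check_meta_description(html, target=150):
    """Summarize the state of a page's meta description."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    if parser.description is None:
        return "missing"
    length = len(parser.description.strip())
    if length == 0:
        return "empty"
    if length > target:
        return f"{length} characters, over the ~{target} target"
    return f"{length} characters"


# Hypothetical page head.
html = '<head><meta name="description" content="Handmade canvas tents in Sacramento."></head>'
print(check_meta_description(html))
```

Length is the only thing measured here. Whether the description invites clicks and highlights the USP is still something to read and judge for yourself.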

Alt text

Analyze the use of alt text on the website’s key pages. This “alternative” text displays on a page if an image file can’t be loaded, is read by screen readers for visually impaired people, and is read by search engine crawlers to help them better understand the contents and context of an image.

In the raw code of a page, you can see the alt text for this camping lantern written up as “Coleman River Gorge Rugged Personal Lantern product image”:

Assess whether alt text describes the image it represents, contains relevant keywords, but avoids long lists of keywords that don’t read well to a human visitor to the website.
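The sketch below shows one way to scan a page’s images for missing or suspiciously long alt text. The 16-word threshold is an arbitrary assumption for illustration, and the file names are hypothetical. Only the lantern alt text comes from the example above.

```python
from html.parser import HTMLParser


class AltTextAuditor(HTMLParser):
    """Record images whose alt text is absent, empty, or unusually long."""

    def __init__(self, max_words=16):
        super().__init__()
        self.max_words = max_words
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        src = attrs.get("src") or "(no src)"
        alt = attrs.get("alt")
        if alt is None:
            self.findings.append((src, "no alt attribute"))
        elif not alt.strip():
            self.findings.append((src, "empty alt text"))
        elif len(alt.split()) > self.max_words:
            self.findings.append((src, "alt text may be keyword-stuffed"))


# Hypothetical product page markup.
page = """
<img src="lantern.jpg" alt="Coleman River Gorge Rugged Personal Lantern product image">
<img src="tent.jpg">
<img src="banner.jpg" alt="">
"""

auditor = AltTextAuditor()
auditor.feed(page)
for src, issue in auditor.findings:
    print(src, "->", issue)
```

An empty alt attribute is not always a mistake, since it is the accepted way to mark purely decorative images, so review those findings individually.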

Header tags

Header tags, sometimes called H tags or referred to in descending order as <H1>, <H2>, <H3> all the way down to <H6>, are an HTML coding element often used to designate the heading and subheadings on a page. Just as a newspaper article typically has a heading and subheadings to parse its content up into smaller, readable chunks, your page content should follow a similar structure for ease of reading.

In the past, it was theorized that the <H1> tag (the main heading of the page) heavily influenced organic search engine rankings. Today, its role has been downgraded to one among many useful elements on a page. Here’s how H tags typically look on a page:

Analyze the H tags on all core pages of the site and note any poor practices.

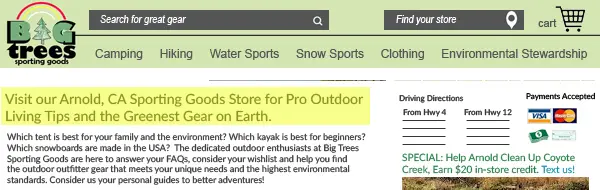
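To review H tags across many pages, you could extract each page’s heading outline with a sketch like this one. What counts as a “poor practice” is a judgment call. This example assumes two common conventions (one H1 per page, and no skipped levels), neither of which is a rule stated in this guide.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for every h1-h6 on a page."""

    LEVELS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag in self.LEVELS:
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag in self.LEVELS and self._level is not None:
            text = " ".join("".join(self._text).split())
            self.headings.append((self._level, text))
            self._level = None


def heading_issues(html):
    """Return the heading outline plus any departures from the assumed conventions."""
    parser = HeadingOutline()
    parser.feed(html)
    levels = [level for level, _ in parser.headings]
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected one h1, found {levels.count(1)}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} is followed by h{cur}, skipping a level")
    return parser.headings, issues


# Hypothetical page fragment.
headings, issues = heading_issues("<h1>Camping Gear</h1><h3>Tents</h3><h2>Lanterns</h2>")
print(headings)
print(issues)
```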

Navigation and internal link structure

In the above screenshot illustrating a header tag, we also see the main navigation menu running horizontally across the masthead area of our website mockup. It contains links to the key sections of the website, such as “Camping”, “Hiking”, and “Water Sports”. Navigation also includes any internal link on the website leading to any other internal page.

Good navigational UX is created by having reliable, consistent menus that users can count on being in the same place across the site, and formatting of links in an easy-to-identify, visual style so that users know what textual elements they can click on.

Pay special attention to whether or not the website has a strong internal link structure, boosting the relevance of key pages. Note down any weaknesses in navigation and internal link structure.

Mobile friendliness

For the past half decade, Google has been urging website owners to prepare for Google treating the mobile version of their website as the version they’ll primarily use for indexing and ranking purposes (you’ll hear this called “mobile-first indexing”).

Use Google’s free Mobile-Friendly Test tool to test this. If a site you’re currently running fails the test, read this tutorial on mobile optimization for troubleshooting tips. Make note of any problems surfaced by the test.

Robots.txt

We’ve reached a very technical leg of our journey in talking about robots.txt. To keep this as short and painless as possible, know that search engines like Google have two main jobs:

- They crawl the web to find content, moving from link to link and page to page across the Internet.

- They index the content they find so that they can serve it up to people searching the Internet.

The first thing a search engine crawler looks for when it arrives at a website (before starting to crawl/spider it) is the robots.txt file. If the crawler finds a robots.txt file, it “reads” it for directions about how to crawl the site.

If you’re auditing a very small local business website, a robots.txt file may not be essential.

But any larger website should have a robots.txt file. It must be named robots.txt (no other variant), placed in the top-level directory of the website, and used to tell search engines which parts of the website you want crawled and which you don’t, and by which crawlers (called user-agents). If this is unfamiliar territory to you, read this in-depth tutorial on configuring the robots.txt file.

The reason it’s so important to get this right is that a botched robots.txt file can accidentally exclude portions of the website from being properly indexed. This is a good time to run a site indexation report, note down any errors, and discover whether there are problems stemming from the robots.txt file.
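To see how a set of robots.txt rules would treat specific pages, you can test them with Python’s standard `urllib.robotparser` module. In this minimal sketch, the rules and paths are invented for illustration. In a real audit you would paste in the contents of the site’s actual robots.txt file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents.
robots_txt = """\
User-agent: *
Disallow: /cart/
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def blocked_pages(paths, user_agent="Googlebot"):
    """Return the paths the given crawler is not allowed to fetch."""
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


# Pages you expect search engines to be able to reach.
important = ["/", "/locations/sacramento", "/cart/checkout"]
print(blocked_pages(important))
```

If a page you want ranked shows up in the blocked list, that is a likely sign of the kind of botched rule described above.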

HTTP status codes

Every local business website should be properly handling the three-digit HTTP status codes a server sends back to a browser when the browser requests a page. At minimum, the site should have a 404 error page, like this one:

Should an Internet user or a visitor to your website try to access a page that no longer exists or mis-type a URL, you want them to see a custom-created 404 error page that directs them to take some specific action to keep them on your site. You can see Moz’s 404 error page by typing in moz.com/ and then putting a jumble of letters after the slash.

As you can see, our 404 error page asks the user to type something into a search box to hopefully find whatever they were looking for. Other 404 errors might direct users to a specific page, or offer other customer support options for getting help. The goal is to keep the visitor engaged on the website instead of losing them.

For other scenarios, like redirecting old pages on your website to new ones, or if you move from an old domain to a new one, you’ll need to learn more about other HTTP status codes. As the need arises, please refer to this in-depth documentation on the different codes and protocols to avoid negative outcomes. Make note of any HTTP problems you’ve discovered.
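When documenting HTTP problems, it can help to translate raw codes into plain language. The sketch below uses Python’s standard `http.HTTPStatus` to label codes and flag error responses. The crawl results are hypothetical, and in practice would come from whatever crawler you use.

```python
from http import HTTPStatus

FAMILIES = {2: "success", 3: "redirect", 4: "client error", 5: "server error"}


def describe_status(code):
    """Return a readable label such as '404 Not Found (client error)'."""
    phrase = HTTPStatus(code).phrase
    family = FAMILIES.get(code // 100, "informational")
    return f"{code} {phrase} ({family})"


def flag_problem_responses(crawl_results):
    """Keep only the URLs that returned a 4xx or 5xx status."""
    return {url: describe_status(code) for url, code in crawl_results.items() if code >= 400}


# Hypothetical crawl results: URL path -> status code.
crawl = {"/": 200, "/old-tents": 301, "/summer-sale": 404, "/search": 500}

for url, label in flag_problem_responses(crawl).items():
    print(url, "->", label)
```

Redirects are not flagged here, but they are worth a separate look to confirm that old pages point to the most relevant new ones.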

Sitemaps

A sitemap is a resource that lists out all of the pages on a website you would like to have a search engine like Google index. It can also sometimes be used by human visitors who are having trouble finding something on your website (which is a UX problem you need to solve).

Typically, when we talk about sitemaps, we’re talking about two different types: HTML sitemaps and XML sitemaps. The larger your website is, the more appropriate it is to manage both types of sitemaps.

HTML sitemaps are the ones humans can easily read. They sit on your website (commonly linked to from your website footer). Some companies go to great lengths to create really beautiful sitemaps, but in general, most sitemaps look something like this, with a large list of links to all of the key pages on the website:

XML sitemaps are written specifically for search engine bots to crawl. Some website platforms (like WordPress) can include plugins that auto-generate XML sitemaps. Alternatively, you can generate one by entering your website URL into a platform like XML-Sitemaps.com. Doing so is free for websites with 500 or fewer pages. You can then submit the XML sitemap to Google via these instructions or via Google Search Console. Just remember that submitting a sitemap doesn’t guarantee that Google will index your pages. It’s simply a way of signaling to them that you want them to do so.

The more complex and large the site structure, the more time you should spend assessing whether sitemaps are being used to the business’s best advantage.
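For a sense of what an XML sitemap contains, here is a minimal sketch that builds one from a list of page URLs using Python’s standard library. The URLs are hypothetical, and optional fields such as last-modified dates are left out. A plugin or generator tool like those mentioned above will typically do more.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap listing each URL once, in order."""
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS})
    for url in dict.fromkeys(urls):  # drops repeats while keeping order
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, including a location landing page that a store
# locator widget might otherwise hide from crawlers.
pages = [
    "https://ecotent.com/",
    "https://ecotent.com/locations/sacramento",
]

print(build_sitemap(pages))
```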

Local business data, listed in one place, moves across the Internet via a series of relationships held by three main sources: primary data aggregators, directories, and search engines. A primary data aggregator, like Infogroup, can feed the information they have about a business to a directory like YP.com, and can also feed that data to a search engine like Google. Meanwhile, directories can also feed search engines, but they also feed one another, like Yelp feeding reviews to MapQuest.

Your goal in this three-step dance is three-fold. You want to be sure that all three sources have accurate and complete information about the business so that:

- Human searchers can discover, interact with, and choose the business.

- Search engines can discover, evaluate, and rank the business.

- Good data, instead of wrong information, is being circulated about the business amongst the aggregators, search engines, and directories.

Your goal in auditing the body of structured citations a business has created or acquired is to weed out any problems and provide strategic suggestions. Follow these steps to do so:

Audit Google Business Profile (GBP) first

Devote the most time to auditing the brand’s Google Business Profile listings. Use the extensive key in Chapter 1 of this guide to assess the strengths and weaknesses of each element of the Google Business Profile listing relative to the top competitors you’ve identified.

If the business is managing its listing via Moz Local, you can be confident that duplicate listings are being found and resolved.

Audit the most visible listings second

Search Google for the brand’s name and its most important search phrases, and document any local business listing that’s coming up in the first 3-5 pages of the organic results. These are the citations that will be most visible to customers, forming the core of the brand’s online reputation and possessing the most power to facilitate discovery, traffic, and transactions.

Document whether these most-visible listings are being managed for accuracy and activity (like reviews, third party images, etc.).

Audit other major players quickly

Run each of the business locations through a free citation checker like the Moz Check Your Presence tool. Note down any major sources the tool is saying contain inaccurate or missing data or listings.
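If you are recording listing data in a spreadsheet as you go, a comparison like the one sketched below can highlight NAP inconsistencies. All business details and directory names are invented. The normalization rules (ignoring punctuation and case, and comparing the last ten digits of US phone numbers) are assumptions you may want to tighten or loosen.

```python
import re


def normalize_phone(phone):
    """Keep digits only, and compare on the last ten (assumes US numbers)."""
    return re.sub(r"\D", "", phone)[-10:]


def normalize_text(value):
    """Lowercase, strip punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", "", value.lower()).split())


def nap_mismatches(canonical, listings):
    """Return (source, field, listed value) for every field that differs."""
    issues = []
    for source, listing in listings.items():
        for field in ("name", "address", "phone"):
            norm = normalize_phone if field == "phone" else normalize_text
            listed = listing.get(field, "")
            if norm(listed) != norm(canonical[field]):
                issues.append((source, field, listed))
    return issues


# Hypothetical correct NAP and the data found on two directories.
canonical = {
    "name": "Eco Tents, Inc.",
    "address": "123 Example St, Sacramento, CA 95814",
    "phone": "(916) 555-0100",
}
listings = {
    "Directory A": {"name": "Eco Tents Inc", "address": "123 Example St., Sacramento, CA 95814", "phone": "+1 916-555-0100"},
    "Directory B": {"name": "Eco Tents, Inc.", "address": "123 Example St, Sacramento, CA 95814", "phone": "916-555-0199"},
}

for source, field, value in nap_mismatches(canonical, listings):
    print(source, "has a different", field + ":", value)
```

Here only the wrong phone number on the second directory is reported, because the punctuation differences on the first are treated as equivalent.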

Finally, plan your listing management solutions

It’s a rare case when a citation audit doesn’t turn up some problems that must be resolved. You’ll need to make a decision about whether to go with manual or automated location data management, and sometimes you’ll want a combination of both.

The main pro of manual management is that it’s a hands-on experience with direct control of listings. The main con is that it’s labor intensive. Keeping track of all listings in a spreadsheet, manually hunting for duplicates and resolving them, managing changes across all listings when some aspect of the business information changes, and staying alert to incoming reviews and questions is not a small job. The manual workload can quickly drain budgets.

The main pros of automated listings software like Moz Local are that it puts all of your major listings into a single dashboard, automatically detects and resolves harmful duplicate listings, alerts you to incoming reviews and questions, and makes it extremely easy to make changes to your business information whenever necessary.

There are two main cons of automated listings management software. First, you’ll be paying a fee in exchange for the lightening of your manual workload (though for most local businesses, pricing of good software is a reasonable business expense). Second, not all software is of equal value. Some software may sell you listings on large numbers of platforms that have no visibility to human searchers. Others may not include listings on particular platforms that are important to your industry. Carefully evaluate the right product for each business’s needs.

The least-hassle strategy is to use software to cover your major bases, so you’re controlling the bulk of your listings from a single dashboard, enabling you to distribute changes across the location data ecosystem with just a few clicks of your mouse. Moz Local will distribute your data to these major partners in the US, with separate partner sets in the UK and Canada:

- Search Engines: Google, Bing, Apple

- Aggregators: Foursquare, Data Axle (formerly Infogroup), Neustar Localeze

- Directories: Yelp, Facebook, Instagram, YP, Superpages, DexKnows, BrownBook, Judy’s Book, Waze, Uber, Nextdoor, ezLocal, CitySquares, Cylex, Hotfrog, USInfo, ShowMe Local, TomTom, Here, Opendi, Yalwa, iGlobal, Manta, Tupalo, US City, N49, Pages24, Find Open, Whereto, Navmii

- GPS and navigation: Uber, Navmii, Waze, TomTom, HERE

Then, if a particular directory is ranking highly for your business but isn’t included in the software you’re using, manage only that one manually. For example, Moz Local doesn’t currently push data directly to FindLaw.com, and if you’re marketing a legal practice, you’ll likely want to manage a listing on this platform if it’s ranking highly for the brand. You can choose to manage this specialized industry directory manually, but leave the rest to automation.

This approach will ensure you’re not wasting any unnecessary time on cumbersome manual management of listings that you could otherwise be keeping updated in just seconds via software, freeing up that time for other, more creative tasks.